Automatic Migrations for ClickHouse with Atlas

ClickHouse, one of the prominent columnar databases, is designed for real-time analytics, providing exceptional speed and efficiency in handling large datasets.

Many teams adopt ClickHouse for its speed and cost-efficiency only to discover that the day 2 operations of managing its schema can be a puzzle. Sure, if your schema contains a handful of tables that rarely change, you’re probably not going to feel much of this pain. But for mission-critical applications, managing complex and interconnected schemas while moving fast without breaking things becomes difficult.

Enter: Atlas

Atlas helps developers manage their database schema as code - abstracting away the intricacies of database schema management. With Atlas, users provide the desired state of the database schema and Atlas automatically plans the required migrations.

In this guide, we will dive into setting up Atlas for ClickHouse, and introduce the different workflows available.

Prerequisites

- Docker

- Atlas installed on your machine:

- macOS + Linux

- Homebrew

- Docker

- Windows

- Manual Installation

To download and install the latest release of the Atlas CLI, simply run the following in your terminal:

curl -sSf https://atlasgo.sh | sh

Get the latest release with Homebrew:

brew install ariga/tap/atlas

To pull the Atlas image and run it as a Docker container:

docker pull arigaio/atlas

docker run --rm arigaio/atlas --help

If the container needs access to the host network or a local directory, use the --net=host flag and mount the desired

directory:

docker run --rm --net=host \

-v $(pwd)/migrations:/migrations \

arigaio/atlas migrate apply

--url "mysql://root:pass@:3306/test"

Download the latest release and move the atlas binary to a file location on your system PATH.

Logging in to Atlas

To use ClickHouse with Atlas, you'll need to log in to Atlas. If it's your first time, you will be prompted to create both an account and a workspace (organization):

- Via Web

- Via Token

- Via Environment Variable

atlas login

atlas login --token "ATLAS_TOKEN"

ATLAS_TOKEN="ATLAS_TOKEN" atlas login

Inspecting our Database

Let's start off by spinning up a database using Docker:

docker run --rm -d --name atlas-demo -e CLICKHOUSE_DB=demo -p 9000:9000 clickhouse/clickhouse-server:latest

If you are already using ClickHouse Cloud and want to inspect it, you can work with Atlas through Url and utilize it throughout the guide.

clickhouse://user:pass@CLICKHOUSE-CLOUD-HOST:9440/schema?secure=true

For this example we will begin with a minimal database with a users table and an id as the primary key.

CREATE TABLE `users` (

`id` UInt64,

`name` String NOT NULL,

PRIMARY KEY (`id`)

) ENGINE = MergeTree() ORDER BY id;

To create the table above on our local database, we can run the following command:

docker exec -it atlas-demo clickhouse-client --query 'CREATE TABLE demo.users (id UInt64, name String NOT NULL, PRIMARY KEY (id)) ENGINE = MergeTree() ORDER BY id'

The atlas schema inspect command supports reading the database description provided by a URL and outputting it in

different formats, including Atlas DDL (default), SQL, and JSON. In this guide, we will

demonstrate the flow using both the Atlas DDL and SQL formats, as the JSON format is often used for processing the

output using jq.

- Atlas DDL (HCL)

- SQL

To inspect our locally-running ClickHouse instance, use the -u flag and write the output to a file named schema.hcl:

atlas schema inspect -u "clickhouse://localhost:9000/demo" > schema.hcl

Open the schema.hcl file to view the Atlas schema that describes our database.

table "users" {

schema = schema.demo

engine = MergeTree

column "id" {

null = false

type = UInt64

}

column "name" {

null = false

type = String

}

primary_key {

columns = [column.id]

}

}

schema "demo" {

engine = sql("Memory")

}

This first block represents a table resource with id, and name

columns. The schema field references the demo schema that is defined in the block below. In addition, the primary_key

sub-block defines the id column as the primary key for the table. Atlas strives to mimic the syntax of the database

that the user is working against. In this case, the type for the id column is UInt64, and String for the name column.

To inspect our locally-running ClickHouse instance, use the -u flag and write the output to a file named schema.sql:

atlas schema inspect -u "clickhouse://localhost:9000/demo" --format '{{ sql . }}' > schema.sql

Open the schema.sql file to view the inspected SQL schema that describes our database.

CREATE TABLE `users` (

`id` UInt64,

`name` String)

ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

For in-depth details on the atlas schema inspect command, covering aspects like inspecting specific schemas,

handling multiple schemas concurrently, excluding tables, and more, refer to our documentation

here.

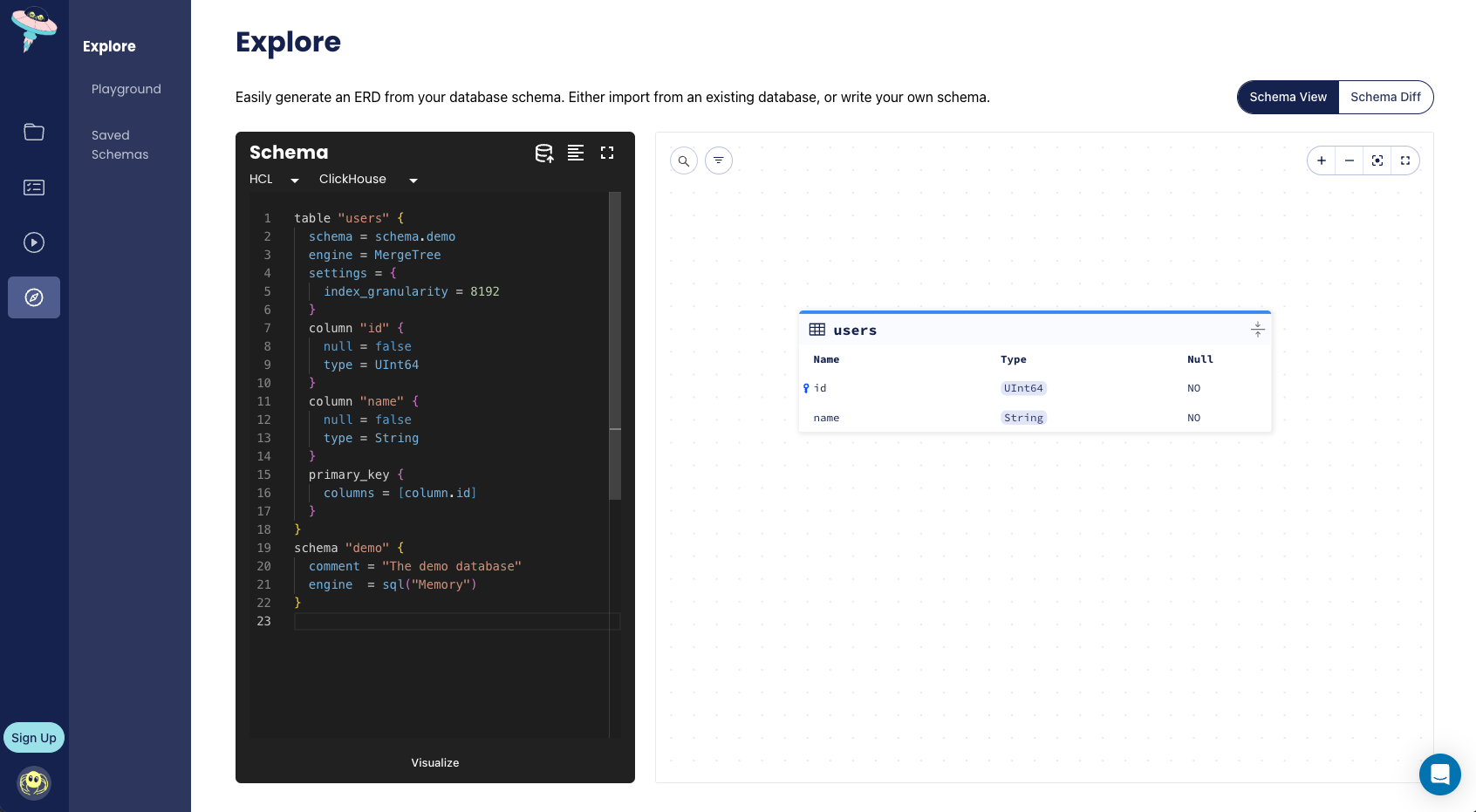

To generate an Entity Relationship Diagram (ERD), or a visual representation of our schema, we can add the -w flag

to the inspect command:

atlas schema inspect -u "clickhouse://localhost:9000/demo" -w

Declarative Migrations

The declarative approach lets users manage schemas by defining the desired state of the database as code. Atlas then inspects the target database and calculates an execution plan to reconcile the difference between the desired and actual states. Let's see this in action.

We will start off by making a change to our schema file, such as adding a repos table:

- Atlas DDL (HCL)

- SQL

table "users" {

schema = schema.demo

engine = MergeTree

column "id" {

null = false

type = UInt64

}

column "name" {

null = false

type = String

}

primary_key {

columns = [column.id]

}

}

table "repos" {

schema = schema.demo

engine = MergeTree

column "id" {

type = UInt64

null = false

}

column "name" {

type = String

null = false

}

column "owner_id" {

type = Bigint

null = false

}

primary_key {

columns = [column.id]

}

}

schema "demo" {

engine = sql("Memory")

}

-- Create "users" table

CREATE TABLE `users` (

`id` UInt64,

`name` String)

ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

-- Create "repos" table

CREATE TABLE `repos` (

`id` UInt64,

`name` String,

`owner_id` Int64)

ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

Now that our desired state has changed, to apply these changes to our database, Atlas will plan a migration for us

by running the atlas schema apply command:

- Atlas DDL (HCL)

- SQL

atlas schema apply \

-u "clickhouse://localhost:9000/demo" \

--to file://schema.hcl \

--dev-url "docker://clickhouse/23.11/demo"

atlas schema apply \

-u "clickhouse://localhost:9000/demo" \

--to file://schema.sql \

--dev-url "docker://clickhouse/23.11/demo"

Apply the changes, and that's it! You have successfully run a declarative migration.

For a more detailed description of the atlas schema apply command refer to our documentation

here.

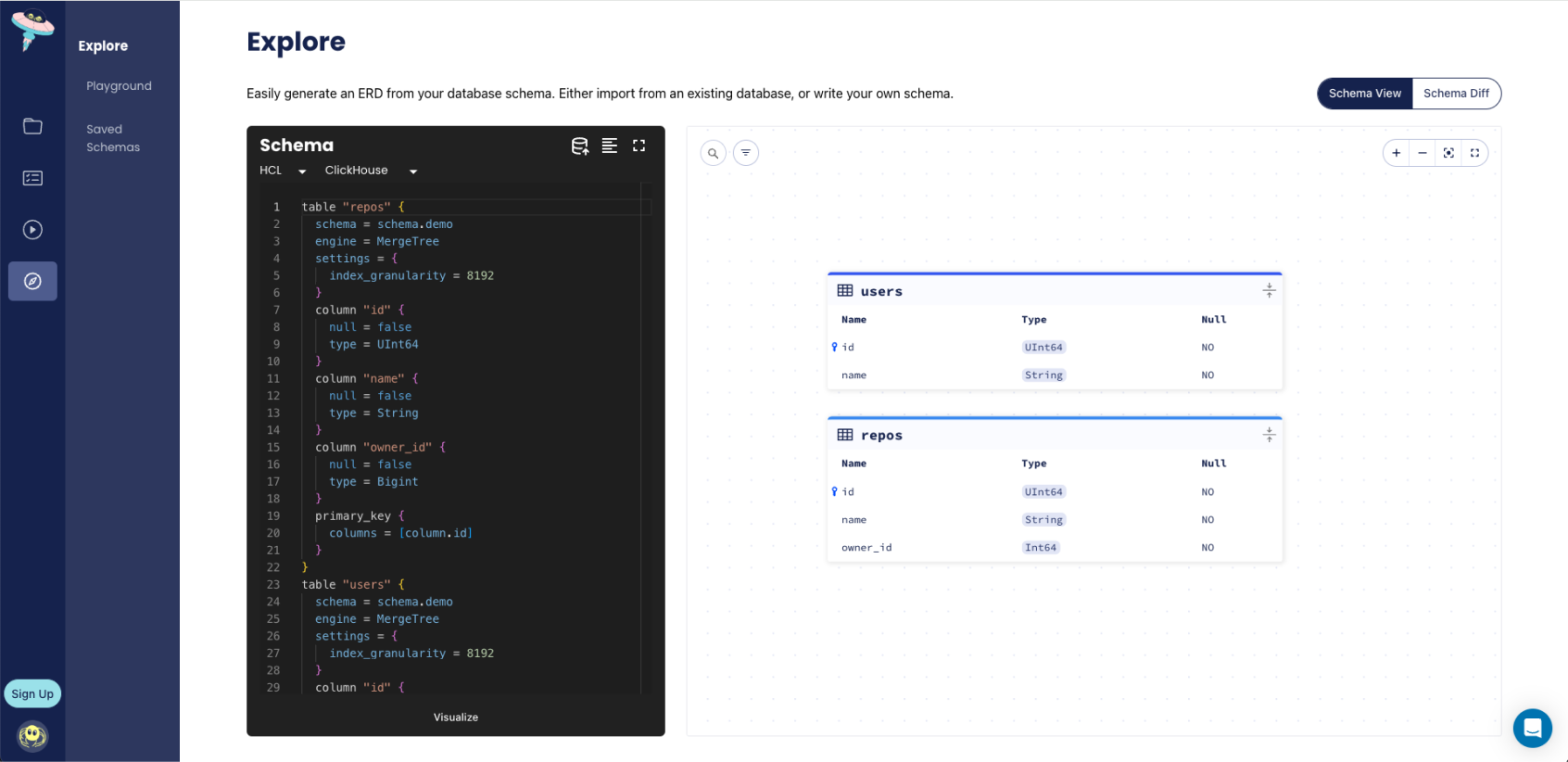

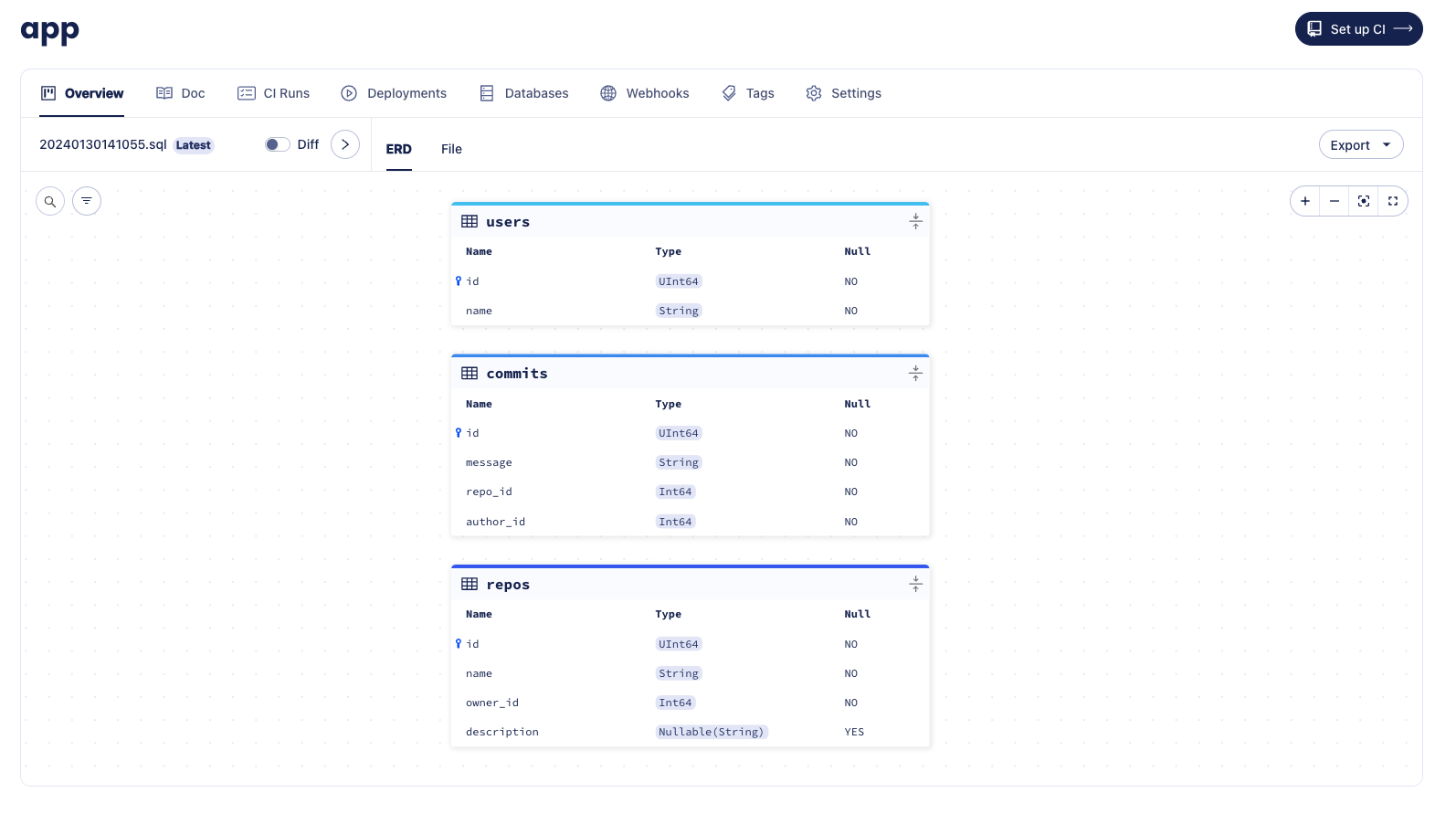

To ensure that the changes have been made to the schema, let's run the inspect command with the -w flag once more

and view the ERD:

Versioned Migrations

Alternatively, the versioned migration workflow, sometimes called "change-based migrations", allows each change to the database schema to be checked-in to source control and reviewed during code-review. Users can still benefit from Atlas intelligently planning migrations for them, however they are not automatically applied.

Creating the first migration

In the versioned migration workflow, our database state is managed by a migration directory. The migration directory holds all of the migration files created by Atlas, and the sum of all files in lexicographical order represents the current state of the database.

To create our first migration file, we will run the atlas migrate diff command, and we will provide the necessary parameters:

--dirthe URL to the migration directory, by default it is file://migrations.--tothe URL of the desired state. A state can be specified using a database URL, HCL or SQL schema, or another migration directory.--dev-urla URL to a Dev Database that will be used to compute the diff.

- Atlas DDL (HCL)

- SQL

atlas migrate diff initial \

--to file://schema.hcl \

--dev-url "docker://clickhouse/23.11/dev"

atlas migrate diff initial \

--to file://schema.sql \

--dev-url "docker://clickhouse/23.11/dev"

Run ls migrations, and you'll notice that Atlas has automatically created a migration directory for us, as well as

two files:

- 20240130122951_initial.sql

- atlas.sum

-- Create "users" table

CREATE TABLE `users` (

`id` UInt64,

`name` String)

ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

-- Create "repos" table

CREATE TABLE `repos` (

`id` UInt64,

`name` String,

`owner_id` Int64)

ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

h1:NrYfakYn4W3xiS9VqcP98sqvgLUPn9pJpxFndh1GWsQ=

20240130122951.sql h1:R+eBw2nOk2+VLBvJ/dn49OphxzfxoWEBUxAy4Zp3VAE=

The migration file represents the current state of our database, and the sum file is used by Atlas to maintain the integrity of the migration directory. To learn more about the sum file, read the documentation.

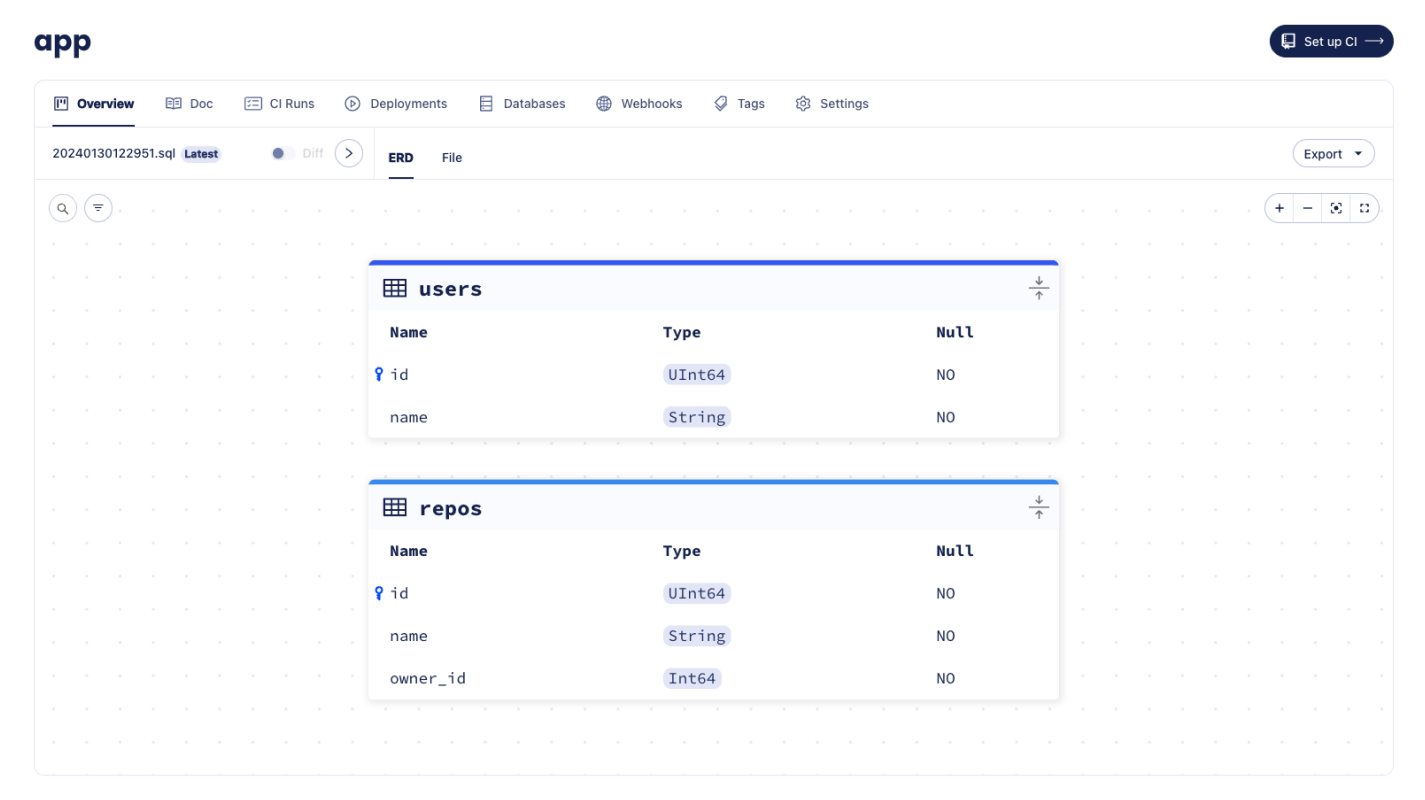

Pushing migration directories to Atlas

Now that we have our first migration, we can apply it to a database. There are multiple ways to accomplish this, with most methods covered in the guides section. In this example, we'll demonstrate how to push migrations to Atlas Cloud, much like how Docker images are pushed to Docker Hub.

Migration Directory created with atlas migrate push

Let's name our new migration project app and run atlas migrate push:

atlas migrate push app \

--dev-url "docker://clickhouse/23.11"

Once the migration directory is pushed, Atlas prints a URL to the created directory, similar to the once shown in the image above.

Applying migrations

Once our app migration directory has been pushed, we can apply it to a database from any CD platform without

necessarily having our directory there.

We'll create a simple Atlas configuration file (atlas.hcl) to store the settings for our local environment:

# The "dev" environment represents our local testings.

env "local" {

url = "clickhouse://localhost:9000/example"

migration {

dir = "atlas://app"

}

}

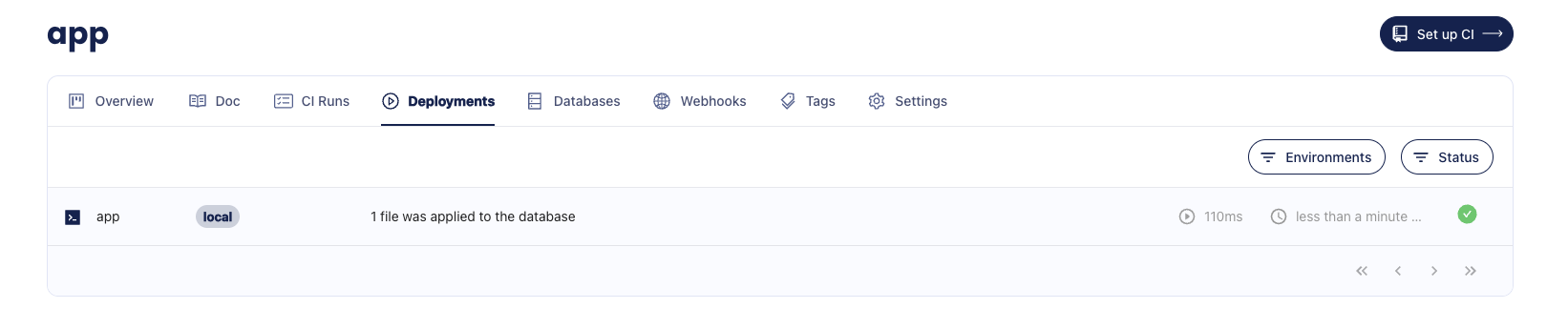

The final step is to apply the migrations to the database. Let's run atlas migrate apply with the --env flag

to instruct Atlas to select the environment configuration from the atlas.hcl file:

atlas migrate apply --env local

Boom! After applying the migration, you should receive a link to the deployment and the database where the migration was applied. Here's an example of what it should look like:

Migration deployment reported created with atlas migrate apply

Generating another migration

After applying the first migration, it's time to update our schema defined in the schema file and tell Atlas to generate another migration. This will bring the migration directory (and the database) in line with the new state defined by the desired schema (schema file).

Let's make two changes to our schema:

- Add a new

descriptioncolumn to our repos table - Add a new

commitstable

- Atlas DDL (HCL)

- SQL

table "users" {

schema = schema.demo

engine = MergeTree

column "id" {

null = false

type = UInt64

}

column "name" {

null = false

type = String

}

primary_key {

columns = [column.id]

}

}

table "repos" {

schema = schema.demo

engine = MergeTree

column "id" {

type = UInt64

null = false

}

column "name" {

type = String

null = false

}

column "description" {

type = sql("Nullable(String)")

null = true

}

column "owner_id" {

type = Bigint

null = false

}

primary_key {

columns = [column.id]

}

}

table "commits" {

schema = schema.demo

engine = MergeTree

column "id" {

type = UInt64

null = false

}

column "message" {

type = String

null = false

}

column "repo_id" {

type = Bigint

null = false

}

column "author_id" {

type = Bigint

null = false

}

primary_key {

columns = [column.id]

}

}

schema "demo" {

engine = sql("Memory")

}

CREATE TABLE `users` (

`id` UInt64,

`name` String NOT NULL

) ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

CREATE TABLE `repos` (

`id` UInt64,

`name` String NOT NULL,

`description` String NULL,

`owner_id` Bigint NOT NULL

) ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;;

CREATE TABLE `commits` (

`id` UInt64,

`message` String NOT NULL,

`repo_id` Bigint NOT NULL,

`author_id` Bigint NOT NULL,

) ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

Next, let's run the atlas migrate diff command once more:

- Atlas DDL (HCL)

- SQL

atlas migrate diff add_commits \

--to file://schema.hcl \

--dev-url "docker://clickhouse/23.11/dev"

atlas migrate diff add_commits \

--to file://schema.sql \

--dev-url "docker://clickhouse/23.11/dev"

Run ls migrations, and you'll notice that a new migration file has been generated.

ALTER TABLE `repos` ADD COLUMN `description` Nullable(String);

-- Create "commits" table

CREATE TABLE `commits` (

`id` UInt64,

`message` String,

`repo_id` Int64,

`author_id` Int64

) ENGINE = MergeTree

PRIMARY KEY (`id`)

SETTINGS index_granularity = 8192;

Let's run atlas migrate push again and

observe the new file on the migration directory page.

atlas migrate push app \

--dev-url "docker://clickhouse/23.11/dev"

Migration Directory created with atlas migrate push

Next Steps

In this guide we learned about the declarative and versioned workflows, and how to use Atlas to generate migrations, push them to an Atlas workspace and apply them to databases.

Next steps:

- Read the full docs to learn HCL schema syntax or about specific ClickHouse column types

- Learn how to set up CI for your migration directory

- Deploy schema changes with Terraform or Kubernetes

- Learn about modern CI/CD principles for databases

For more in-depth guides, check out the other pages in this section or visit our Docs section.

Have questions? Feedback? Find our team on our Discord server.